#Queue anylogic enable preemption free#

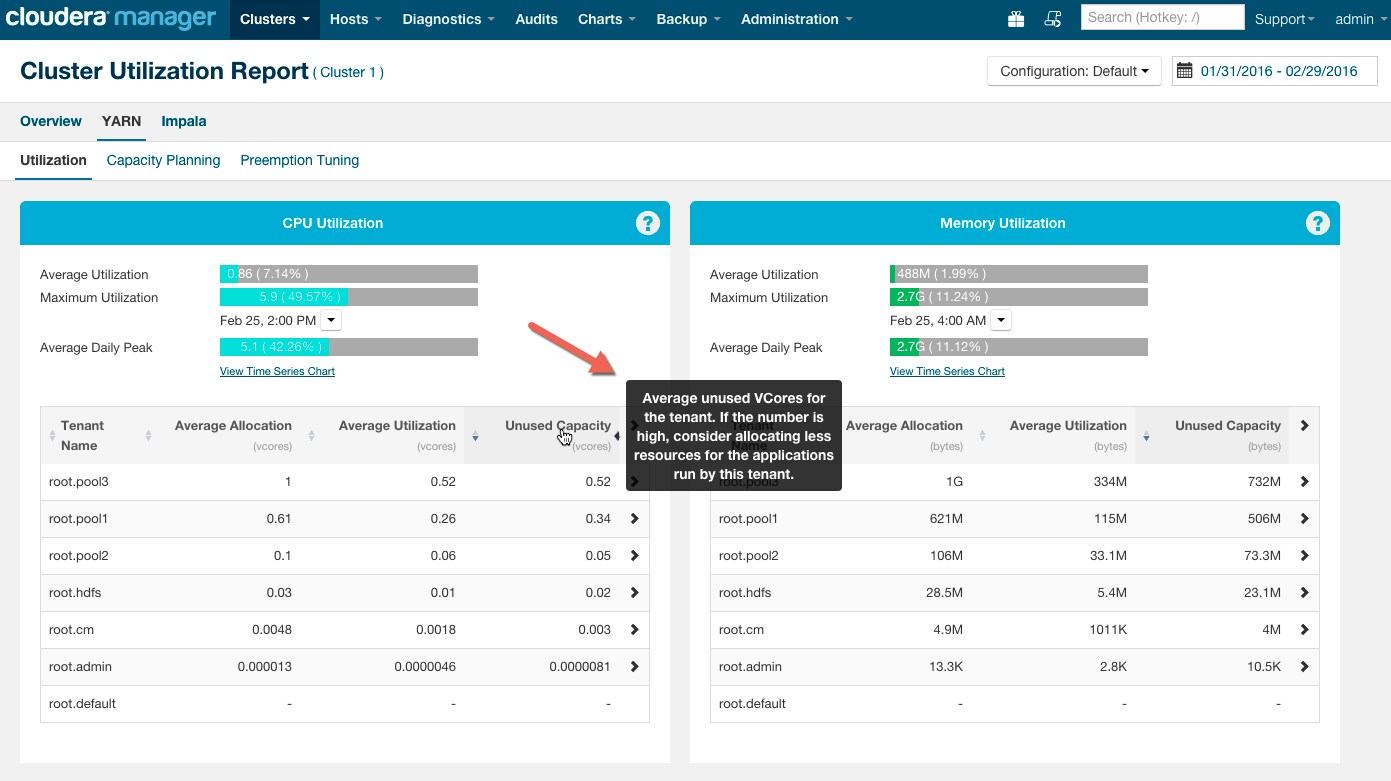

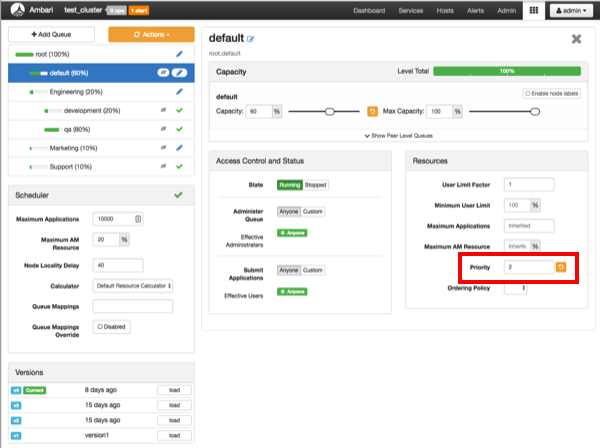

This is the 4th post in a series that explores the theme of enabling diverse workloads in YARN. Mayank Bansal, of eBay, is a guest contributing author of this collaborative blog. See the introductory post to understand the context around all the new features for diverse workloads as part of Apache Hadoop YARN in HDP.

In Hadoop YARN's Capacity Scheduler, resources are shared by setting capacities on a hierarchy of queues. A queue's configured capacity guarantees the minimum resources it can get from the ResourceManager. The capacities of all the leaf queues under a parent queue, at any level, sum to 100%. For example, queue-A has a 20% share of the cluster, queue-B has 30% and queue-C has 50%, which together add up to 100%.

To enable elasticity in a shared cluster, CapacityScheduler can allow queues to use more resources than their configured capacities when the cluster has idle resources. Without preemption, let's say queue-A uses more resources than its configured capacity, taking advantage of this elasticity feature. At the same time, another queue, queue-B, which is currently under-satisfied, starts asking for more resources. queue-B now has to wait until queue-A relinquishes the resources it is currently using. As a result, applications in queue-B can expect long delays, and there is no way to meet the SLAs of applications submitted to queue-B in this situation.

Support for preemption is a way to respect elasticity and SLAs together: when no resources are unused in a cluster, and some under-satisfied queues ask for new resources, the cluster will take back resources from queues that are consuming more than their configured capacities.

#How preemption works#

All the information related to queues is tracked by the ResourceManager. A component called PreemptionMonitor in the ResourceManager is responsible for performing preemption when needed. It runs in a separate thread, making relatively slow passes to decide when to rebalance queues. Regular scheduling itself happens in a different thread from the PreemptionMonitor.

We will now explain how preemption works in practice, using an example situation in a Hadoop YARN cluster. Let's say that we have 3 queues in the cluster with 4 applications running. Two applications are running in queue-A and one in each of queues B and C. Queues A and B have already used more resources than their configured minimum capacities. Queue-C is under-satisfied and asking for more resources.

We now describe the steps in the preemption process, which tries to bring resource utilization across queues back into balance over time.

#Preemption Step #1: Get containers to-be-preempted from over-used queues#

The PreemptionMonitor checks the queues' status every X seconds and figures out how many resources need to be taken back from each queue / application to fulfil the needs of under-satisfied queues. As a result, it arrives at a list of containers to be preempted. For example, containers C5, C6 and C7 from App1 and containers C2 and C3 from App2 are marked to-be-preempted. We will explore the internal algorithms for selecting containers to be preempted in a separate detailed post shortly.

#Preemption Step #2: Notifying ApplicationMasters so that they can take actions#

Instead of killing the marked containers immediately to free resources, the PreemptionMonitor inside the ResourceManager notifies the ApplicationMasters (AMs), so that the AMs can take advanced actions before the ResourceManager itself commits to a hard decision. This gives ApplicationMasters a way to do a second-level pass (similar to scheduling) to decide which containers to free up for the right set of resources. More details on what information AMs obtain and how they can act on it are given in the section "Impact of preemption on applications".
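The queue-level bookkeeping behind the first preemption step can be illustrated with a small Python sketch. It is not the PreemptionMonitor's actual algorithm, which the post defers to a separate write-up. It simply assumes that the shortfall of under-satisfied queues is reclaimed from over-capacity queues in proportion to how far each one exceeds its guarantee. The `Queue` class, the `reclaim_plan` function and the usage numbers are all hypothetical; only the 20% / 30% / 50% capacities come from the example above.

```python
from dataclasses import dataclass


@dataclass
class Queue:
    name: str
    capacity_pct: float  # configured (guaranteed) share of the cluster, in percent
    used: int            # resources currently in use, in arbitrary units
    pending: int         # resources requested but not yet granted


def reclaim_plan(queues, cluster_total):
    """Return {queue name: amount to take back} under a proportional assumption."""
    total_pct = sum(q.capacity_pct for q in queues)
    if abs(total_pct - 100.0) > 1e-9:
        raise ValueError(f"leaf-queue capacities must sum to 100%, got {total_pct}")

    guaranteed = {q.name: cluster_total * q.capacity_pct / 100 for q in queues}

    # Demand that under-satisfied queues are entitled to within their guarantee.
    needed = sum(min(q.pending, max(0, guaranteed[q.name] - q.used)) for q in queues)

    # Idle resources can satisfy demand without any preemption.
    idle = cluster_total - sum(q.used for q in queues)
    to_reclaim = max(0, needed - idle)

    # How far each over-used queue sits above its guarantee.
    over = {q.name: q.used - guaranteed[q.name]
            for q in queues if q.used > guaranteed[q.name]}
    total_over = sum(over.values())
    if to_reclaim == 0 or total_over == 0:
        return {}

    # Never take a queue below its guarantee.
    to_reclaim = min(to_reclaim, total_over)
    return {name: to_reclaim * excess / total_over for name, excess in over.items()}


# Hypothetical usage numbers on a 100-unit cluster with the capacities from the text.
queues = [
    Queue("queue-A", 20, used=40, pending=0),
    Queue("queue-B", 30, used=60, pending=0),
    Queue("queue-C", 50, used=0, pending=30),
]
plan = reclaim_plan(queues, cluster_total=100)
```

With these made-up numbers the cluster has no idle resources, so queue-C's request of 30 units is split across queue-A and queue-B according to their excess usage. A real policy would then translate these per-queue amounts into specific containers.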
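The second-level pass that an ApplicationMaster can make after being notified might look like the following Python sketch. It assumes, purely for illustration, that the AM knows how much memory it has been asked to give back and prefers to release the containers that have made the least progress. The `Container` class, the `progress` field and `choose_containers_to_release` are hypothetical names and are not part of the YARN API.

```python
from dataclasses import dataclass


@dataclass
class Container:
    cid: str
    memory_gb: int
    progress: float  # fraction of the task's work completed, 0.0 to 1.0


def choose_containers_to_release(running, requested_gb):
    """Pick containers to give back, least progress first, until the request is covered."""
    chosen, freed = [], 0
    for c in sorted(running, key=lambda c: c.progress):
        if freed >= requested_gb:
            break
        chosen.append(c.cid)
        freed += c.memory_gb
    return chosen


# Hypothetical state of one application's containers.
running = [
    Container("C5", 2, 0.9),
    Container("C6", 2, 0.1),
    Container("C7", 2, 0.5),
    Container("C8", 2, 0.2),
]
released = choose_containers_to_release(running, requested_gb=4)
```

Here the AM gives up C6 and C8, the two containers that would lose the least work, rather than whichever containers happened to be marked first. Other policies, such as checkpointing work before release, fit the same hook.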